Maths and Evolutionary Biology

Mathematics is often utilised across many fields. Let's look at an example from biology, evolutionary biology and paleontology: trying to understand the development of Homo sapiens. We can start with a large data set which gives mammal body mass and brain size in grams (downloaded from... Continue Reading →

Time dependent gravity exploration

Time dependent gravity exploration In our universe we have a gravitational constant - i.e. the strength of gravity does not depend on time. If gravity changed with time, then the gravitational force exerted by the Sun on Earth would decrease (or increase) over time, with all other factors remaining the same. Interestingly, time-dependent gravity was first... Continue Reading →

The Holy Grail of Maths: Langlands. (specialization vs generalization).

https://www.youtube.com/watch?v=4dyytPboqvE This year's TOK question for Mathematics is the following: "How can we reconcile the opposing demands for specialization and generalization in the production of knowledge? Discuss with reference to mathematics and one other area of knowledge" This is a nice chance to discuss the Langlands program which was recently covered in a really excellent... Continue Reading →

The Monty Hall Problem – Extended!

https://www.youtube.com/watch?v=mhlc7peGlGg A brief summary of the Monty Hall problem. There are 3 doors. Behind 2 doors are goats and behind 1 door is a car. You choose a door at random. The host then opens another door to reveal a goat. Should you stick with your original choice or swap to the other unopened door?... Continue Reading →
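A quick Monte Carlo simulation makes the answer concrete. This is a minimal sketch rather than the post's own method, and it assumes the host always opens a goat door that the player did not pick, choosing at random when two such doors are available.

```python
import random

def monty_hall(trials: int, switch: bool, seed: int = 0) -> float:
    """Estimate the probability of winning the car with the stick or switch strategy."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        car = rng.randrange(3)     # door hiding the car
        choice = rng.randrange(3)  # player's first pick, made at random
        # The host opens a door that is neither the player's pick nor the car.
        opened = rng.choice([d for d in range(3) if d != choice and d != car])
        if switch:
            # Move to the only door that is neither picked nor opened.
            choice = next(d for d in range(3) if d != choice and d != opened)
        wins += (choice == car)
    return wins / trials

print("stick :", monty_hall(100_000, switch=False))
print("switch:", monty_hall(100_000, switch=True))
```

Switching wins exactly when the first pick was a goat, which happens 2/3 of the time, so the two estimates should settle near 1/3 and 2/3.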

The Perfect Rugby Kick

https://www.youtube.com/watch?v=rHdYv62F5fs The Perfect Rugby Kick This was inspired by the ever excellent Numberphile video which looked at this problem from the perspective of GeoGebra. I thought I would look at the algebra behind this. In rugby, when a try is scored there is an additional kick (the conversion kick) which can... Continue Reading →
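One common way to set the problem up (an assumption here, since the post's own algebra is not shown) is: the kick is taken somewhere on the line through the try point, perpendicular to the try line, with the near and far posts a and b metres to one side of that line. The angle between the posts seen from d metres back is θ(d) = arctan(b/d) − arctan(a/d), and setting dθ/dd = 0 gives a(d² + b²) = b(d² + a²), i.e. d = √(ab). The sketch below checks this against a brute-force search; the 15 m offset is purely illustrative, and the posts are taken to be 5.6 m apart as in rugby union.

```python
import math

def kick_angle(d: float, a: float, b: float) -> float:
    """Angle (radians) between the posts, seen from d metres back from the try line.
    a, b: sideways distances from the kicking line to the near and far post."""
    return math.atan(b / d) - math.atan(a / d)

def best_distance(a: float, b: float) -> float:
    # dθ/dd = 0  =>  a(d^2 + b^2) = b(d^2 + a^2)  =>  d^2 = ab
    return math.sqrt(a * b)

a, b = 15.0, 15.0 + 5.6  # illustrative: try scored 15 m outside the near post
d_star = best_distance(a, b)
# Brute-force check over distances from 0.1 m to 60 m in 1 cm steps.
grid = [i / 100 for i in range(10, 6001)]
d_grid = max(grid, key=lambda d: kick_angle(d, a, b))
print(f"d* = {d_star:.2f} m, grid search = {d_grid:.2f} m")
print(f"max angle = {math.degrees(kick_angle(d_star, a, b)):.2f} degrees")
```

The formula only applies when the try is scored outside the posts; a try between the posts can simply be converted from straight in front.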

What is the average distance between 2 points in a rectangle?

What is the average distance between 2 points in a rectangle? Say we have a rectangle, and choose any 2 random points within it. We then could calculate the distance between the 2 points. If we do this a large number of times, what would the average distance between the 2 points be? Monte Carlo... Continue Reading →
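A minimal Monte Carlo sketch of that idea, assuming points are chosen uniformly and independently (the post's own simulation may be set up differently). For the unit square the exact mean is known to be (2 + √2 + 5 ln(1 + √2))/15 ≈ 0.5214, which gives a check on the estimate.

```python
import math
import random

def mean_distance(width: float, height: float,
                  samples: int = 200_000, seed: int = 1) -> float:
    """Monte Carlo estimate of the mean distance between two uniform random points."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        x1, y1 = rng.uniform(0, width), rng.uniform(0, height)
        x2, y2 = rng.uniform(0, width), rng.uniform(0, height)
        total += math.hypot(x2 - x1, y2 - y1)
    return total / samples

estimate = mean_distance(1.0, 1.0)
# Known closed form for the unit square.
exact = (2 + math.sqrt(2) + 5 * math.log(1 + math.sqrt(2))) / 15
print(f"Monte Carlo: {estimate:.4f}, exact: {exact:.4f}")
```

Changing `width` and `height` gives estimates for any rectangle; the error shrinks roughly like 1/√samples.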

The Barnsley Fern: Mathematical Art

The Barnsley Fern: Mathematical Art This pattern of a fern pictured above was generated by a simple iterative program designed by mathematician Michael Barnsley. I downloaded the Python code from the excellent Tutorialspoint and then modified it slightly to run on repl.it. What we are seeing is the result of 40,000 individual points - each plotted... Continue Reading →
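The fern comes from repeatedly applying one of four affine maps, chosen at random with probabilities 1%, 85%, 7% and 7%. This sketch uses the widely published coefficients rather than the Tutorialspoint code mentioned above, and only generates the 40,000 points; plotting them (for example as a scatter plot) is left out.

```python
import random

# (a, b, c, d, e, f, probability) for x' = a*x + b*y + e, y' = c*x + d*y + f
MAPS = [
    (0.00, 0.00, 0.00, 0.16, 0.0, 0.00, 0.01),     # stem
    (0.85, 0.04, -0.04, 0.85, 0.0, 1.60, 0.85),    # successively smaller leaflets
    (0.20, -0.26, 0.23, 0.22, 0.0, 1.60, 0.07),    # largest left-hand leaflet
    (-0.15, 0.28, 0.26, 0.24, 0.0, 0.44, 0.07),    # largest right-hand leaflet
]

def barnsley_fern(n_points: int = 40_000, seed: int = 0):
    """Return the points visited by the random iteration, starting from (0, 0)."""
    rng = random.Random(seed)
    weights = [m[6] for m in MAPS]
    x, y = 0.0, 0.0
    points = []
    for _ in range(n_points):
        a, b, c, d, e, f, _prob = rng.choices(MAPS, weights=weights)[0]
        x, y = a * x + b * y + e, c * x + d * y + f
        points.append((x, y))
    return points

pts = barnsley_fern()
print(len(pts), "points; x from", min(p[0] for p in pts), "to", max(p[0] for p in pts))
```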

Galileo’s Inclined Planes

Galileo's Inclined Planes This post is based on the maths and ideas of Hahn's Calculus in Context - which is probably the best mathematics book I've read in 20 years of studying and teaching mathematics. Highly recommended for both students and teachers! Hahn talks us through the mathematics, experiments and thought process of Galileo as... Continue Reading →

Time dependent gravity and cosmology!

Time dependent gravity and cosmology! In our universe we have a gravitational constant - i.e. the strength of gravity does not depend on time. If gravity changed with time, then the gravitational force exerted by the Sun on Earth would decrease (or increase) over time, with all other factors remaining the same. Interestingly, time-dependent gravity was... Continue Reading →

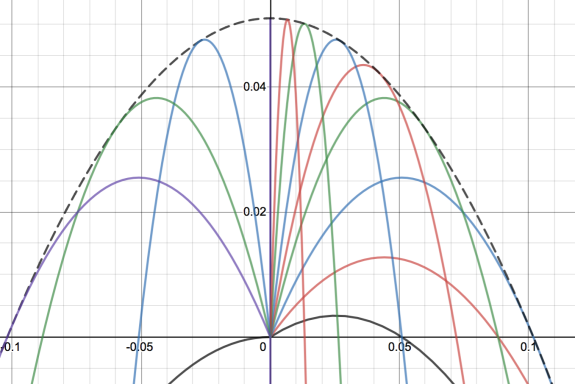

Envelope of projectile motion

Envelope of projectile motion For any given launch angle and a fixed initial velocity, a projectile follows a parabolic path. In the graph above I have changed the launch angle to generate different quadratics. The black dotted line is called the envelope of all these curves, and is the boundary line formed when I... Continue Reading →
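Ignoring air resistance, the path for launch speed v and angle θ is y = x tan θ − g x²/(2v² cos² θ). Maximising over θ at a fixed x gives the envelope y = v²/(2g) − g x²/(2v²). The sketch below checks that formula numerically, assuming g = 9.81 m/s² and an illustrative v = 20 m/s.

```python
import math

G = 9.81  # m/s^2, assumed value of g

def height(x: float, v: float, theta: float) -> float:
    """Height of the projectile at horizontal distance x for launch angle theta."""
    return x * math.tan(theta) - G * x**2 / (2 * v**2 * math.cos(theta) ** 2)

def envelope(x: float, v: float) -> float:
    """Envelope of all trajectories with launch speed v."""
    return v**2 / (2 * G) - G * x**2 / (2 * v**2)

v = 20.0
angles = [math.radians(k / 100) for k in range(1, 9000)]  # 0.01 to 89.99 degrees
for x in (5.0, 20.0, 35.0):
    best = max(height(x, v, t) for t in angles)
    print(f"x = {x:4}: highest point over all angles = {best:.4f}, envelope = {envelope(x, v):.4f}")
```

Points above the envelope cannot be reached at speed v whatever the launch angle, and every point on it is reached by exactly one trajectory.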

Modelling the spread of Coronavirus (COVID-19)

Using Maths to model the spread of Coronavirus (COVID-19) This coronavirus is the latest virus to warrant global fears over a disease pandemic. Throughout history we have seen pandemic diseases such as the Black Death in Middle Ages Europe and the Spanish Flu at the beginning of the 20th century. More recently we have seen... Continue Reading →
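A standard starting point for modelling an epidemic is the SIR (Susceptible, Infected, Recovered) model. Whether the post uses exactly this model is an assumption, and the values of β (infection rate) and γ (recovery rate) below are illustrative, not fitted to COVID-19 data. The sketch integrates the equations with simple Euler steps.

```python
def simulate_sir(beta: float, gamma: float, population: float,
                 infected0: float, days: int, dt: float = 0.1):
    """Forward-Euler integration of
    dS/dt = -beta*S*I/N,  dI/dt = beta*S*I/N - gamma*I,  dR/dt = gamma*I."""
    s, i, r = population - infected0, infected0, 0.0
    history = [(0.0, s, i, r)]
    steps = round(days / dt)
    for k in range(1, steps + 1):
        new_infections = beta * s * i / population * dt
        new_recoveries = gamma * i * dt
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        history.append((k * dt, s, i, r))
    return history

# Illustrative parameters only: basic reproduction number R0 = beta / gamma = 2.5
hist = simulate_sir(beta=0.25, gamma=0.1, population=1_000_000, infected0=10, days=300)
peak_day, _, peak_i, _ = max(hist, key=lambda row: row[2])
print(f"peak of about {peak_i:,.0f} infected around day {peak_day:.0f}")
```

Raising γ or lowering β (for example through social distancing) lowers R0 = β/γ, which both flattens and delays the peak.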

Waging war with maths: Hollow squares

Waging war with maths: Hollow squares The picture above [US National Archives, Wikipedia] shows an example of the hollow square infantry formation which was used in wars over several hundred years. The idea was to have an outer square of men, with an inner empty square. This then allowed the men in the formation to... Continue Reading →
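If the outer square is n soldiers wide and the empty inner square is m wide, the formation holds n² − m² soldiers. The sketch below lists every hollow square that uses a given number of men, assuming (our assumption, not necessarily the post's) that the wall is a whole number of ranks thick, so n − m is even, and that the centre is not empty of space altogether (m ≥ 1).

```python
def hollow_square_size(outer: int, inner: int) -> int:
    """Soldiers in a hollow square: the full outer square minus the empty inner square."""
    return outer**2 - inner**2

def formations(total: int):
    """All (outer, inner) side lengths using exactly `total` soldiers.
    Assumes outer - inner is even (whole ranks on every side) and inner >= 1."""
    results = []
    # The thinnest square with side n uses 4n - 4 men, so n <= total / 4 + 1.
    for outer in range(3, total // 4 + 2):
        for inner in range(outer - 2, 0, -2):
            if hollow_square_size(outer, inner) == total:
                results.append((outer, inner))
    return results

print(formations(24))  # [(5, 1), (7, 5)]
```

For 24 men this finds a square two ranks deep around a single empty space, and a 7 × 7 square one rank deep.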

Normal Numbers – and random number generators

https://www.youtube.com/watch?v=5TkIe60y2GI Normal Numbers - and random number generators Numberphile have a nice new video where Matt Parker discusses all different types of numbers - including "normal numbers". Normal numbers are defined as irrational numbers for which the probability of choosing any given 1 digit number is the same, the probability of choosing any given 2... Continue Reading →
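A classic example is Champernowne's constant 0.123456789101112..., formed by writing the counting numbers one after another, which is known to be normal in base 10. The sketch below just counts single-digit frequencies in its first million digits; this is an illustration, not a proof, and the digit 0 visibly lags because the counting numbers never start with 0.

```python
from collections import Counter

def champernowne_digits(n_digits: int) -> str:
    """First n_digits decimal digits of Champernowne's constant 0.123456789101112..."""
    parts, length, k = [], 0, 1
    while length < n_digits:
        s = str(k)
        parts.append(s)
        length += len(s)
        k += 1
    return "".join(parts)[:n_digits]

digits = champernowne_digits(1_000_000)
counts = Counter(digits)
for d in sorted(counts):
    print(d, f"{counts[d] / len(digits):.4f}")
```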

Volume optimization of a cuboid

Volume optimization of a cuboid This is an extension of the Nrich task which is currently live - where students have to find the maximum volume of a cuboid formed by cutting squares of size x from each corner of a 20 x 20 piece of paper. I'm going to use an n x 10 rectangle... Continue Reading →
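For an n × m sheet the box has volume V(x) = x(n − 2x)(m − 2x), so V'(x) = 12x² − 4(n + m)x + nm. The smaller root, x = ((n + m) − √((n + m)² − 3nm))/6, gives the maximum. The sketch below uses m = 10 as in the post and checks the formula against the original 20 × 20 task, where x = 10/3.

```python
import math

def volume(x: float, n: float, m: float = 10.0) -> float:
    """Volume of the open box made by cutting x-by-x squares from an n-by-m sheet."""
    return x * (n - 2 * x) * (m - 2 * x)

def optimal_cut(n: float, m: float = 10.0) -> float:
    """Smaller root of V'(x) = 12x^2 - 4(n + m)x + nm = 0, which is the maximum."""
    return ((n + m) - math.sqrt((n + m) ** 2 - 3 * n * m)) / 6

for n in (10, 20, 40, 1000):
    x = optimal_cut(n)
    print(f"n = {n}: x = {x:.4f}, V = {volume(x, n):.2f}")

print(optimal_cut(20, 20))  # the original 20 x 20 task: 10/3
```

As n grows the optimal cut creeps up towards 2.5, a quarter of the fixed side, since √(n² − 10n + 100) ≈ n − 5 for large n.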

Zeno’s Paradox – Achilles and the Tortoise

http://www.youtube.com/watch?v=skM37PcZmWE Zeno's Paradox - Achilles and the Tortoise This is a very famous paradox from the Greek philosopher Zeno - who argued that a runner (Achilles) who constantly halved the distance between himself and a tortoise would never actually catch the tortoise. The video above explains the concept. There are two slightly different versions to... Continue Reading →
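In the halving version, the fractions of the gap covered at each stage form a geometric series whose partial sums approach the whole gap, so infinitely many stages fit inside a finite distance (and, at constant speed, a finite time):

$$\frac{1}{2} + \frac{1}{4} + \frac{1}{8} + \cdots + \frac{1}{2^n} = 1 - \frac{1}{2^n} \;\longrightarrow\; 1 \quad (n \to \infty)$$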

Fourier Transforms – the most important tool in mathematics?

Fourier Transform The Fourier Transform and the associated Fourier series are among the most important mathematical tools in physics. Physicist Lord Kelvin remarked in 1867: "Fourier's theorem is not only one of the most beautiful results of modern analysis, but it may be said to furnish an indispensable instrument in the treatment of nearly... Continue Reading →

Non Euclidean Geometry – An Introduction

Non Euclidean Geometry - An Introduction It wouldn't be an exaggeration to describe the development of non-Euclidean geometry in the 19th Century as one of the most profound mathematical achievements of the last 2000 years. Ever since Euclid (c. 330-275BC) included in his geometrical proofs an assumption (postulate) about parallel lines, mathematicians had been trying... Continue Reading →

World Cup Maths: How to take a perfect penalty

Statistics to win penalty shoot-outs With the World Cup upon us again we can perhaps look forward to yet another heroic defeat on penalties by England. England are in fact the worst country of any of the major footballing nations at taking penalties, having won only 1 out of 7 shoot-outs at the Euros and... Continue Reading →

Modelling more Chaos

Modelling more Chaos This post was inspired by Rachel Thomas' Nrich article on the same topic. I'll carry on the investigation suggested in the article. We're going to explore chaotic behavior - where small changes to initial conditions lead to widely different outcomes. Chaotic behavior is what makes modelling (say) weather patterns so complex. f(x)... Continue Reading →

Modelling Chaos

Modelling Chaos This post was inspired by Rachel Thomas' Nrich article on the same topic. I'll carry on the investigation suggested in the article. We're going to explore chaotic behavior - where small changes to initial conditions lead to widely different outcomes. Chaotic behavior is what makes modelling (say) weather patterns so complex. Let's start... Continue Reading →
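A standard example of this sensitivity is the logistic map x → r x(1 − x). Whether it is the function used in Rachel Thomas' article is an assumption; the starting values below are illustrative. Two starting points one billionth apart soon give completely different orbits when r = 4.

```python
def logistic_orbit(x0: float, r: float, steps: int):
    """Iterate the logistic map x_{n+1} = r * x_n * (1 - x_n), returning all values."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs

a = logistic_orbit(0.2, 4.0, 60)
b = logistic_orbit(0.2 + 1e-9, 4.0, 60)
for n in (0, 10, 20, 30, 40, 50, 60):
    print(n, abs(a[n] - b[n]))
```

At r = 4 the gap roughly doubles each step on average, so a difference of 10⁻⁹ grows to the size of the values themselves within about 30 iterations.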

The Folium of Descartes

The Folium of Descartes The folium of Descartes is a famous curve named after the French philosopher and mathematician René Descartes (pictured top right). As well as making significant contributions to philosophy ("I think therefore I am"), he was also the father of modern geometry through the development of the (x, y) coordinate system of plotting algebraic... Continue Reading →
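The folium is the curve x³ + y³ = 3axy. A standard parametrisation is x = 3at/(1 + t³), y = 3at²/(1 + t³) for t ≠ −1; substituting gives 27a³t³/(1 + t³)² on both sides. The sketch below, with a = 1 as an arbitrary choice, generates points this way and confirms they satisfy the equation.

```python
def folium_point(t: float, a: float = 1.0):
    """Point on x^3 + y^3 = 3axy from the parametrisation (valid for t != -1)."""
    denom = 1 + t**3
    return 3 * a * t / denom, 3 * a * t**2 / denom

def residual(x: float, y: float, a: float = 1.0) -> float:
    """Zero exactly when (x, y) lies on the folium."""
    return x**3 + y**3 - 3 * a * x * y

for t in (-3.0, -0.5, 0.0, 0.5, 1.0, 2.0):
    x, y = folium_point(t)
    print(f"t = {t:5}: ({x:.4f}, {y:.4f}), residual = {residual(x, y):.2e}")
```

The value t = 1 gives (3a/2, 3a/2), the tip of the loop, and as t approaches −1 the points run off along the asymptote x + y + a = 0.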

Project Euler: Coding to Solve Maths Problems

Project Euler: Coding to Solve Maths Problems Project Euler, named after one of the greatest mathematicians of all time, has been designed to bring together the twin disciplines of mathematics and coding. Computers are becoming ever more integral to the field of mathematics - and now creative coding can be a method of solving... Continue Reading →
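As an illustration, Project Euler's first problem asks for the sum of all the multiples of 3 or 5 below 1000. The sketch below solves it twice: by brute force, and with the arithmetic-series formula plus inclusion-exclusion, which shows the mathematics and the coding reinforcing each other.

```python
def sum_multiples_below(k: int, limit: int) -> int:
    """Sum of k, 2k, 3k, ... below limit, using the arithmetic-series formula."""
    m = (limit - 1) // k
    return k * m * (m + 1) // 2

def brute_force(limit: int) -> int:
    return sum(n for n in range(limit) if n % 3 == 0 or n % 5 == 0)

def inclusion_exclusion(limit: int) -> int:
    # Multiples of 15 are counted under both 3 and 5, so subtract them once.
    return (sum_multiples_below(3, limit) + sum_multiples_below(5, limit)
            - sum_multiples_below(15, limit))

print(brute_force(1000), inclusion_exclusion(1000))
```

The formula version takes the same time whether the limit is 1000 or 10¹⁸, which is the kind of insight Project Euler's later problems reward.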

Spotting Asset Bubbles

Spotting Asset Bubbles Asset bubbles are formed when a service, product or company becomes massively over-valued only to crash, taking with it most of its investors' money. There are many examples of asset bubbles in history - the Dutch tulip bulb mania and the South Sea bubble are two of the most famous historical examples.... Continue Reading →

The Remarkable Dirac Delta Function

The Remarkable Dirac Delta Function This is a brief introduction to the Dirac Delta function - named after the legendary Nobel prize winning physicist Paul Dirac. Dirac was one of the founding fathers of the mathematics of quantum mechanics, and is widely regarded as one of the most influential physicists of the 20th Century. This... Continue Reading →

The Rise of Bitcoin

The Rise of Bitcoin Bitcoin is in the news again as it hits $10,000 a coin - the online crypto-currency has seen huge growth over the past 1 1/2 years, and there are reports that hedge funds are now investing part of their portfolios in the currency. So let's have a look at... Continue Reading →

Modeling with springs and weights

This is a quick example of how Tracker software can be used to generate a nice physics-related exploration. I took a spring and attached it to a stand with a weight hanging from the end. I then took a video of the movement of the spring and uploaded this to Tracker. Height against time The first... Continue Reading →

Cracking ISBN and Credit Card Codes

Cracking ISBN and Credit Card Codes ISBN codes are used on all books published worldwide. It’s a very powerful and useful code, because it has been designed so that if you enter the wrong ISBN code the computer will immediately know – so that you don’t end up with the wrong book. There is lots... Continue Reading →
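Two standard check-digit rules show how this works: for ISBN-10, the digits are weighted 10, 9, ..., 1 and the total must be divisible by 11 (a final "X" stands for 10), and credit card numbers use the Luhn check. The sketch below implements both; the post may also cover ISBN-13, and the example numbers are textbook test values, not real cards.

```python
def isbn10_valid(isbn: str) -> bool:
    """ISBN-10: weights 10..1; the weighted total must be divisible by 11."""
    chars = isbn.replace("-", "").upper()
    if len(chars) != 10:
        return False
    total = 0
    for weight, ch in zip(range(10, 0, -1), chars):
        if ch == "X" and weight == 1:  # 'X' is only allowed as the check digit
            value = 10
        elif ch.isdigit():
            value = int(ch)
        else:
            return False
        total += weight * value
    return total % 11 == 0

def luhn_valid(number: str) -> bool:
    """Luhn check: double every second digit from the right, subtracting 9 if over 9."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

print(isbn10_valid("0-306-40615-2"), isbn10_valid("0-306-40615-3"))
print(luhn_valid("79927398713"), luhn_valid("79927398710"))
```

Because 11 is prime, the ISBN-10 rule catches every single wrong digit and every swap of two adjacent digits; the Luhn check catches every single wrong digit and most adjacent swaps.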

NASA, Aliens and Codes in the Sky

NASA, Aliens and Binary Codes from the Stars The Drake Equation was intended by astronomer Frank Drake to spark a dialogue about the odds of intelligent life on other planets. He was one of the founding members of SETI - the Search for Extraterrestrial Intelligence - which has spent the past 50 years scanning... Continue Reading →
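The Drake Equation is a product of seven factors, N = R* · fp · ne · fl · fi · fc · L: the rate of star formation in our galaxy, the fraction of stars with planets, the number of potentially habitable planets per such system, the fractions of those on which life, intelligence and detectable communication technology arise, and the length of time such civilisations release detectable signals. The values in the sketch are purely illustrative guesses, not estimates from the post or from SETI.

```python
from math import prod

def drake(r_star, f_p, n_e, f_l, f_i, f_c, lifetime):
    """Number of detectable civilisations: N = R* * fp * ne * fl * fi * fc * L."""
    return prod([r_star, f_p, n_e, f_l, f_i, f_c, lifetime])

# Hypothetical inputs, chosen only to show how the factors combine.
print(drake(r_star=1.0, f_p=0.5, n_e=2.0, f_l=1.0, f_i=0.1, f_c=0.1, lifetime=10_000))
```

Because the factors multiply, changing any single guess by a factor of 10 changes N by a factor of 10, which is why published estimates range so widely.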

Benford’s Law to catch fraudsters

http://www.youtube.com/watch?v=vIsDjbhbADY Benford's Law - Using Maths to Catch Fraudsters Benford's Law is a very powerful and counter-intuitive mathematical rule which describes the distribution of leading digits (i.e. the first digit of a number). You would probably expect the distribution to be uniform - that the digit 9 occurs as often as the digit 1. But... Continue Reading →
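Benford's Law gives the probability of leading digit d as log₁₀(1 + 1/d), so a 1 appears about 30.1% of the time and a 9 only about 4.6%. The sketch below compares this with the leading digits of 2¹ to 2¹⁰⁰⁰, a sequence known to follow the law; the post itself may use different data.

```python
import math
from collections import Counter

def benford(d: int) -> float:
    """Benford probability that the leading digit is d (1-9)."""
    return math.log10(1 + 1 / d)

# Leading digits of 2^1 ... 2^1000.
leading = Counter(int(str(2**n)[0]) for n in range(1, 1001))
for d in range(1, 10):
    print(d, f"observed {leading[d] / 1000:.3f}", f"Benford {benford(d):.3f}")
```

The probabilities add up to exactly 1 because the sum telescopes to log₁₀(10). Fraud checks compare digit frequencies in, say, expense claims against these values.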

Simulating Traffic Jams and Asteroids

Simulations - Traffic Jams and Asteroid Impacts Why do traffic jams form? How do the speed limit, traffic lights or the number of lorries on the road affect road conditions? You can run a number of different simulations - looking at ring road traffic, lane closures and how robust the system is by applying an... Continue Reading →

Even Pigeons Can Do Maths

Even Pigeons Can Do Maths This is a really interesting study from a couple of years ago, which shows that even pigeons can deal with numbers as abstract quantities - in the study the pigeons counted groups of objects in their head and then classified the groups in terms of size. From the New York... Continue Reading →

Maths of Global Warming – Modeling Climate Change

Maths of Global Warming - Modeling Climate Change The above graph is from NASA's climate change site, and was compiled from analysis of ice core data. Scientists from the National Oceanic and Atmospheric Administration (NOAA) drilled into thick polar ice and then looked at the carbon content of air trapped in small bubbles in the... Continue Reading →

Modelling Radioactive Decay

Modelling Radioactive decay We can model radioactive decay of atoms using the following equation: $N(t) = N_0 e^{-\lambda t}$, where $N_0$ is the initial quantity of the element, $\lambda$ is the radioactive decay constant, $t$ is time, and $N(t)$ is the quantity of the element remaining after time $t$. So, for Carbon-14 which has a half life of... Continue Reading →
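Setting N(t) = N₀/2 at the half-life gives λ = ln 2 / t½, and solving N(t)/N₀ = f for t gives t = −ln(f)/λ, the basis of radiocarbon dating. The sketch below uses an arbitrary half-life of 1 time unit so the numbers are easy to check; substituting an isotope's actual half-life gives real ages.

```python
import math

def decay_constant(half_life: float) -> float:
    """From N0/2 = N0 * exp(-lam * t_half): lam = ln 2 / t_half."""
    return math.log(2) / half_life

def remaining(n0: float, lam: float, t: float) -> float:
    """N(t) = N0 * exp(-lam * t)"""
    return n0 * math.exp(-lam * t)

def age_from_fraction(fraction: float, half_life: float) -> float:
    """Solve N(t)/N0 = fraction for t: t = -ln(fraction) / lam."""
    return -math.log(fraction) / decay_constant(half_life)

half_life = 1.0  # arbitrary time units; substitute the isotope's half-life
lam = decay_constant(half_life)
print(remaining(1000, lam, 1.0), remaining(1000, lam, 2.0))  # about 500 and 250
print(age_from_fraction(0.25, half_life))                    # about 2 half-lives
```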

Could Trump be the next President of America?

Could Trump be the next President of America? There is a lot of statistical maths behind polling data to make it as accurate as possible - though poor sampling techniques can lead to unexpected results. For example, in the UK 2015 general election, even though Labour were predicted to win around 37.5% of the... Continue Reading →

The Gini Coefficient – Measuring Inequality

Cartoon from here The Gini Coefficient - Measuring Inequality The Gini coefficient is a value ranging from 0 to 1 which measures inequality. 0 represents perfect equality - i.e. everyone in a population has exactly the same wealth. 1 represents complete inequality - i.e. 1 person has all the wealth and everyone else has nothing.... Continue Reading →
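One way to compute the coefficient directly from a list of wealth values is through the mean absolute difference: G = ΣᵢΣⱼ |xᵢ − xⱼ| / (2n²x̄). The sketch below uses this formula (the post may instead work from the Lorenz curve, which gives the same value) and checks the two extremes.

```python
def gini(values):
    """Gini coefficient via the mean absolute difference:
    G = sum_i sum_j |x_i - x_j| / (2 * n^2 * mean)."""
    n = len(values)
    mean = sum(values) / n
    if mean <= 0:
        raise ValueError("mean wealth must be positive")
    total = sum(abs(a - b) for a in values for b in values)
    return total / (2 * n * n * mean)

print(gini([5, 5, 5, 5]))   # 0.0: perfect equality
print(gini([0, 0, 0, 10]))  # 0.75: one person out of four holds everything
```

With n people the most unequal case gives (n − 1)/n rather than exactly 1, which approaches 1 for large populations. The double loop is O(n²), fine for small examples.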

The Monkey and the Hunter – How to Shoot a Monkey

This is a classic puzzle which is discussed in more detail in an excellent Wired article. The puzzle is best represented by the picture below. We have a hunter who, whilst in the jungle, stumbles across a monkey on a tree branch. However, he knows that the monkey, being clever, will drop from the branch... Continue Reading →
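The surprising answer is to aim straight at the monkey: the bullet and the monkey both fall ½gt² below where they would otherwise be, so the drop cancels. The sketch below checks this for several bullet speeds, ignoring air resistance and assuming the monkey lets go at the moment the gun fires; the 50 m and 20 m distances are illustrative, and the code does not check whether the monkey hits the ground first.

```python
import math

G = 9.81  # m/s^2

def miss_distance(distance: float, branch_height: float, speed: float) -> float:
    """Bullet height minus monkey height when the bullet reaches the tree.
    The gun is aimed straight at the monkey, which drops as the gun fires."""
    angle = math.atan2(branch_height, distance)
    t = distance / (speed * math.cos(angle))  # time to cover the horizontal gap
    bullet_y = speed * math.sin(angle) * t - 0.5 * G * t**2
    monkey_y = branch_height - 0.5 * G * t**2
    return bullet_y - monkey_y

for speed in (30.0, 100.0, 400.0):
    print(speed, miss_distance(distance=50.0, branch_height=20.0, speed=speed))
```

The printed gaps are zero up to rounding error: algebraically the difference is d·tan θ − h, which vanishes because tan θ = h/d.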

How to Design a Parachute

https://www.youtube.com/watch?v=FHtvDA0W34I How to Design a Parachute This post is also inspired by the excellent book by Robert Banks – Towing Icebergs. This book would make a great investment if you want some novel ideas for a maths investigation. The challenge is to design a parachute with a big enough area to make sure that someone... Continue Reading →
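A common simplified model (an assumption here; Banks' own treatment may differ) uses quadratic air resistance: at terminal velocity the drag ½ρC_dAv² balances the weight mg, so the canopy area needed for a safe landing speed v is A = 2mg/(ρC_dv²). The mass, drag coefficient and landing speed below are illustrative values only; 1.225 kg/m³ is the standard sea-level air density.

```python
import math

def required_area(mass: float, landing_speed: float, drag_coeff: float,
                  air_density: float = 1.225, g: float = 9.81) -> float:
    """Canopy area whose terminal velocity equals landing_speed under quadratic drag:
    m g = 0.5 * rho * Cd * A * v^2  =>  A = 2 m g / (rho * Cd * v^2)."""
    return 2 * mass * g / (air_density * drag_coeff * landing_speed**2)

def terminal_velocity(mass: float, area: float, drag_coeff: float,
                      air_density: float = 1.225, g: float = 9.81) -> float:
    """Speed at which drag balances weight."""
    return math.sqrt(2 * mass * g / (air_density * drag_coeff * area))

# Illustrative values only: 90 kg load, Cd = 1.5, target landing speed 5 m/s
area = required_area(mass=90, landing_speed=5.0, drag_coeff=1.5)
print(f"area = {area:.1f} m^2, check v = {terminal_velocity(90, area, 1.5):.2f} m/s")
```

Since A is proportional to 1/v², halving the landing speed needs four times the canopy area.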

Galileo: Throwing cannonballs off The Leaning Tower of Pisa

https://www.youtube.com/watch?v=5C5_dOEyAfk Galileo: Throwing cannonballs off The Leaning Tower of Pisa This post is inspired by the excellent book by Robert Banks - Towing Icebergs. This book would make a great investment if you want some novel ideas for a maths investigation. Galileo Galilei was an Italian mathematician and astronomer who (reputedly) conducted experiments from the... Continue Reading →

The Coastline Paradox and Fractional Dimensions

https://www.youtube.com/watch?v=7dcDuVyzb8Y The Coastline Paradox and Fractional Dimensions The coastline paradox arises from the difficulty of measuring shapes with complicated edges, such as the coastlines of countries like Britain. As we try to be ever more accurate in our measurement of the British coastline, we get an ever larger answer! We can see this demonstrated below:... Continue Reading →
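The Koch curve is an idealised "coastline" that shows the same effect exactly; it is used here as a stand-in, not the British data the post discusses. Measured with a ruler of length 3⁻ⁿ, a Koch curve built on a unit segment takes 4ⁿ steps, so its measured length (4/3)ⁿ grows without limit, and its similarity dimension is log 4 / log 3 ≈ 1.26.

```python
import math

def koch_measurement(n: int):
    """Measure a Koch curve (built on a segment of length 1) with a ruler of length 3**-n.
    The ruler fits 4**n times, so the measured length is (4/3)**n."""
    ruler = 3.0 ** -n
    count = 4 ** n
    return ruler, count, count * ruler

for n in range(0, 7):
    ruler, count, length = koch_measurement(n)
    print(f"ruler {ruler:.5f}: {count:5d} steps, length {length:.3f}")

# Similarity dimension: 4 copies, each scaled by 1/3, so D = log 4 / log 3.
print("dimension =", math.log(4) / math.log(3))
```

A dimension between 1 and 2 is what "fractional dimension" means here: the curve is too wiggly to have a finite length but does not fill an area.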

How to Win at Rock, Paper, Scissors

https://www.youtube.com/watch?v=rudzYPHuewc How to Win at Rock, Paper, Scissors You might think that winning at rock, paper, scissors was purely a matter of chance - after all mathematically each outcome has the same probability. We can express the likelihood of winning in terms of a game theory grid: It is clear that in theory you would... Continue Reading →
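The game theory grid can be written as a payoff matrix (+1 win, 0 draw, −1 loss). This sketch, which assumes that scoring, shows that the uniform strategy (each move with probability 1/3) has expected payoff 0 against any opponent, while a hypothetical opponent who over-plays rock can be exploited.

```python
# Payoff to the row player. Order: rock, paper, scissors.
PAYOFF = [
    [0, -1, 1],   # rock vs rock, paper, scissors
    [1, 0, -1],   # paper
    [-1, 1, 0],   # scissors
]

def expected_payoff(p, q):
    """Expected payoff when I play mixed strategy p and my opponent plays q."""
    return sum(p[i] * q[j] * PAYOFF[i][j] for i in range(3) for j in range(3))

uniform = [1/3, 1/3, 1/3]
rock_lover = [0.5, 0.25, 0.25]  # hypothetical opponent who plays rock half the time
print(expected_payoff(uniform, rock_lover))    # uniform play breaks even against anyone
print(expected_payoff([0, 1, 0], rock_lover))  # 0.25: always playing paper exploits the bias
```

Every column of the matrix sums to zero, which is why uniform play cannot lose on average; winning in practice comes from spotting an opponent's bias.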

Elliptical Curve Cryptography

https://www.youtube.com/watch?v=ulg_AHBOIQU Elliptical Curve Cryptography This post builds on some of the ideas in the previous post on elliptic curves. This blog originally appeared in a Plus Maths article I wrote here. The excellent Numberphile video above expands on some of the ideas below. On a (slightly simplified) level, elliptic curves can be regarded as... Continue Reading →
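The core of elliptic curve cryptography is point addition on a curve y² = x³ + ax + b over the integers modulo a prime, and repeated addition (scalar multiplication), which is easy to compute but hard to undo. The sketch below is a toy Diffie-Hellman-style key exchange on the small curve y² = x³ + 2x + 3 mod 97 with base point (3, 6); the curve, point and private keys are hypothetical choices, far too small to be secure (real systems use standardised curves over primes of around 256 bits).

```python
P_MOD, A, B = 97, 2, 3  # toy curve y^2 = x^3 + 2x + 3 over the integers mod 97
INF = None              # the point at infinity (the group's identity)

def on_curve(pt) -> bool:
    if pt is INF:
        return True
    x, y = pt
    return (y * y - (x**3 + A * x + B)) % P_MOD == 0

def add(p1, p2):
    """Chord-and-tangent addition of two points on the curve."""
    if p1 is INF:
        return p2
    if p2 is INF:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return INF                                          # P + (-P) = infinity
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow(2 * y1, -1, P_MOD)    # tangent slope
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD)  # chord slope
    lam %= P_MOD
    x3 = (lam * lam - x1 - x2) % P_MOD
    y3 = (lam * (x1 - x3) - y1) % P_MOD
    return (x3, y3)

def multiply(k: int, pt):
    """k * pt by double-and-add."""
    result, addend = INF, pt
    while k:
        if k & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        k >>= 1
    return result

G = (3, 6)
assert on_curve(G)
alice_secret, bob_secret = 13, 29  # hypothetical private keys
alice_public = multiply(alice_secret, G)
bob_public = multiply(bob_secret, G)
# Each side combines its own secret with the other's public point.
print(multiply(alice_secret, bob_public), multiply(bob_secret, alice_public))
```

Both printed points agree because 13 · (29 · G) = 29 · (13 · G); an eavesdropper who sees only the public points would need to recover 13 or 29, which is infeasible on curves of realistic size. `pow(x, -1, m)` for modular inverses needs Python 3.8 or later.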

Elliptical Curves

Elliptical Curves Elliptic curves are a very important area of mathematics which has been explored intensively over the past few decades. They have shown tremendous potential as a tool for solving complicated number problems and also for use in cryptography. (This blog is based on the article I wrote for Plus Maths here). Andrew... Continue Reading →

How to use Statistics to win on Penalties

Statistics to win penalty shoot-outs The last World Cup was a relatively rare one for England, with no heroic defeat on penalties, as normally seems to happen. England are in fact the worst country of any of the major footballing nations at taking penalties, having won only 1 out of 6 shoot-outs at the Euros... Continue Reading →

Hyperbolic Geometry

Hyperbolic Geometry The usual geometry taught in school is Euclidean geometry - in which the angles in a triangle add up to 180 degrees. This is based on the idea that the underlying space on which the triangle is drawn is flat. However, if the underlying space is curved then this will no longer be... Continue Reading →

Plotting Stewie Griffin from Family Guy

Plotting Stewie Griffin from Family Guy Computer-aided design is becoming ever more important in many jobs - and with graphing software we can create art using maths functions. For example, the above graph was created by a user, Kara Blanchard, on Desmos. You can see the original graph here, by clicking on each part of the... Continue Reading →

Modeling Volcanoes – When will they erupt?

Modeling Volcanoes - When will they erupt? A recent post by the excellent Maths Careers website looked at how we can model volcanic eruptions mathematically. This is an important branch of mathematics - which looks to assign risk to events and these methods are very important to statisticians and insurers. Given that large-scale volcanic eruptions... Continue Reading →

Mandelbrot and Julia Sets – Pictures of Infinity

https://www.youtube.com/watch?v=0jGaio87u3A Mandelbrot and Julia Sets - Pictures of Infinity The above video is of a Mandelbrot zoom. This is an infinitely detailed picture - it contains fractal patterns no matter how far you enlarge it. To put this video in perspective, it would be like starting with a piece of A4 paper and enlarging it... Continue Reading →
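The Mandelbrot set is the set of complex numbers c for which iterating z → z² + c from z = 0 never escapes to infinity; once |z| > 2 the orbit is guaranteed to escape. The escape-time sketch below uses a cut-off of 200 iterations (an arbitrary choice) and prints a coarse text picture. Julia sets use the same iteration with c fixed and the starting value z varied instead.

```python
def escape_time(c: complex, max_iter: int = 200) -> int:
    """Iterations of z -> z*z + c (from z = 0) before |z| exceeds 2.
    Returns max_iter if the orbit never escapes, so c is treated as in the set."""
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2:
            return n
        z = z * z + c
    return max_iter

# Coarse text picture of the set: '#' marks points that did not escape.
for row in range(21):
    y = 1.2 - row * 0.12
    print("".join("#" if escape_time(complex(-2.1 + col * 0.05, y)) == 200 else "."
                  for col in range(64)))
```

Coloured pictures and zoom videos like the one above come from shading each point by its escape time instead of just marking it in or out.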

Tetrahedral Numbers – Stacking Cannonballs

Tetrahedral Numbers - Stacking Cannonballs This is one of those deceptively simple topics which actually contains a lot of mathematics - and it involves how spheres can be stacked, and how they can be stacked most efficiently. Starting off with the basics we can explore the sequence: 1, 4, 10, 20, 35, 56.... These are... Continue Reading →
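The sequence 1, 4, 10, 20, 35, 56 matches the standard closed form Tₙ = n(n + 1)(n + 2)/6 for a triangular-based pyramid with n layers, where layer k is itself a triangle of k(k + 1)/2 balls. The sketch below computes both ways and checks they agree.

```python
def tetrahedral(n: int) -> int:
    """Balls in a triangular-based pyramid with n layers: n(n+1)(n+2)/6."""
    return n * (n + 1) * (n + 2) // 6

def tetrahedral_by_layers(n: int) -> int:
    # Layer k is a triangle of k(k+1)/2 balls; stack layers 1..n.
    return sum(k * (k + 1) // 2 for k in range(1, n + 1))

print([tetrahedral(n) for n in range(1, 7)])  # [1, 4, 10, 20, 35, 56]
```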

Making Music With Sine Waves

Making Music With Sine Waves Sine and cosine waves are incredibly important for understanding all sorts of waves in physics. Musical notes can be thought of in terms of sine curves where we have the basic formula: y = sin(bt) where t is measured in seconds. b is then connected to the period of the... Continue Reading →
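For y = sin(bt) with t in seconds, the period is 2π/b and the frequency is b/(2π) cycles per second (hertz), so a note of frequency f needs b = 2πf. The sketch assumes concert pitch A4 = 440 Hz and equal temperament (each semitone multiplies the frequency by 2^(1/12)); the post may pick its notes differently.

```python
import math

def b_for_frequency(freq_hz: float) -> float:
    """For y = sin(b t) with t in seconds, period = 2*pi/b, so b = 2*pi*f."""
    return 2 * math.pi * freq_hz

def note_frequency(semitones_from_a4: int) -> float:
    """Equal temperament: each semitone multiplies the frequency by 2**(1/12)."""
    return 440.0 * 2 ** (semitones_from_a4 / 12)

def sample_note(freq_hz: float, seconds: float, rate: int = 44_100):
    """Sample y = sin(b t) at `rate` samples per second."""
    b = b_for_frequency(freq_hz)
    return [math.sin(b * n / rate) for n in range(int(round(seconds * rate)))]

print(b_for_frequency(440.0))  # b for concert A, about 2764.6
print(note_frequency(12))      # one octave up: 880 Hz
samples = sample_note(440.0, 0.01)
```

Doubling b doubles the frequency, which is why notes an octave apart sound so similar.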

Surviving the Zombie Apocalypse

Surviving the Zombie Apocalypse This is part 2 in the maths behind zombies series. See part 1 here. We have previously looked at how a paper by mathematicians from Ottawa University discusses the mathematics behind surviving the zombie apocalypse - and how the mathematics used has many other modelling applications - for understanding the spread... Continue Reading →