Aliquot sequence: An unsolved problem

At school, students get used to the idea that we know all the answers in mathematics, but the aliquot sequence is a simple example of an unsolved problem in mathematics. The code above (if run for long enough on a supercomputer!) might be enough to disprove a conjecture about...
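Here is a minimal sketch of generating an aliquot sequence, assuming the usual definition: each term is the sum of the proper divisors of the previous term. The names `aliquot_sequence` and `max_steps` are illustrative choices and not taken from the original post's code. The loop stops at 0, when a term repeats (a perfect number or a cycle such as an amicable pair), or when the step limit is reached. Plain trial division like this quickly becomes too slow for starting values such as 276, whose sequence is still unresolved.

```python
def sum_proper_divisors(n):
    """Sum of the divisors of n that are smaller than n (0 for n = 1)."""
    if n < 2:
        return 0
    total = 1
    d = 2
    while d * d <= n:
        if n % d == 0:
            total += d
            if d != n // d:        # avoid counting a square root twice
                total += n // d
        d += 1
    return total


def aliquot_sequence(start, max_steps=100):
    """Iterate n -> s(n) until we reach 0, revisit a term, or run out of steps."""
    seq = [start]
    seen = {start}
    for _ in range(max_steps):
        nxt = sum_proper_divisors(seq[-1])
        seq.append(nxt)
        if nxt == 0 or nxt in seen:
            break
        seen.add(nxt)
    return seq


print(aliquot_sequence(12))   # [12, 16, 15, 9, 4, 3, 1, 0]
print(aliquot_sequence(220))  # [220, 284, 220] (an amicable pair)
```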

Winning at Snakes and Ladders

https://www.youtube.com/watch?v=nlm07asSU0c

The fantastic Marcus du Sautoy has just made a video on how to use Markov chains to work out how long it will take to win at Snakes and Ladders. This uses a different method from those I've explored before (Playing Games with Markov Chains), so it's well worth...
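One way to turn the Markov chain idea into code is the sketch below. It is not necessarily the method used in the video or in the earlier post: it solves the expected-number-of-rolls equations E(i) = 1 + (1/6)·ΣE(next square) by repeated substitution until the values settle. The board size, the `jumps` dictionary of snakes and ladders, and the rule that an overshooting roll leaves the counter where it is are all assumptions for illustration, not a real board.

```python
def expected_turns(goal, jumps, sides=6, tol=1e-12, max_iter=100_000):
    """Expected number of rolls to get from square 0 to exactly `goal`.

    jumps: maps the foot of a ladder or the head of a snake to where you end up.
    Assumed rule: a roll that would overshoot `goal` leaves the counter where it is.
    """
    def land(square, roll):
        target = square + roll
        if target > goal:
            return square                 # overshoot: stay put
        return jumps.get(target, target)  # follow any snake or ladder

    # E[goal] = 0; for every other square E[i] = 1 + average of E[land(i, roll)]
    expected = [0.0] * (goal + 1)
    for _ in range(max_iter):
        new = [1 + sum(expected[land(i, r)] for r in range(1, sides + 1)) / sides
               for i in range(goal)] + [0.0]
        if max(abs(a - b) for a, b in zip(new, expected)) < tol:
            return new[0]
        expected = new
    raise RuntimeError("values did not settle; check the board")


print(expected_turns(1, {}))    # about 6: you need exactly a 1
board = {3: 12, 9: 17, 16: 4}   # made-up board: two ladders and one snake
print(expected_turns(20, board))
```

Ladders should lower the expected number of rolls and snakes should raise it, which gives a quick sanity check on any board you try.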

Roll or bust? A strategy for dice games

Let's explore some strategies for getting the best outcome in some dice games. Game 1: 1 die, bust on a 1. We roll 1 die. However, we can roll as many times as we like, adding the score each time. We can choose to stop when we...
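A sketch of one way to compare "keep rolling until my total reaches t" strategies for Game 1, assuming that rolling a 1 busts and scores 0 for the whole turn. The helper name `expected_score` is hypothetical. It computes the exact expected score with fractions by working backwards from the totals where we would stop, then picks the best threshold.

```python
from fractions import Fraction


def expected_score(threshold, sides=6, bust=1):
    """Exact expected final score for 'keep rolling until the total is >= threshold'.

    Assumes rolling `bust` ends the turn with a score of 0.
    """
    top = threshold + sides  # no reachable total exceeds threshold - 1 + sides
    value = [Fraction(0)] * (top + 1)
    for total in range(top, -1, -1):
        if total >= threshold:
            value[total] = Fraction(total)  # stop and bank the total
        else:
            # a bust contributes 0; every other face moves us to total + face
            value[total] = sum(value[total + face] for face in range(1, sides + 1)
                               if face != bust) / sides
    return value[0]


best = max(range(1, 41), key=expected_score)  # first maximum wins ties
print(best, float(expected_score(best)))
```

The reasoning behind the answer: with a running total T, one more roll gains (2 + 3 + 4 + 5 + 6)/6 = 20/6 on average but loses T with probability 1/6, so it is worth rolling while 20/6 > T/6, i.e. while T < 20. At exactly T = 20 the extra roll is neutral, which is why thresholds 20 and 21 give exactly the same expected value.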

GPT-4 vs ChatGPT. The beginning of an intelligence revolution?

The above graph (image source) is one of the most incredible bar charts you'll ever see: it compares the capabilities of GPT-4, OpenAI's new large language model, with those of its previous iteration, ChatGPT. As we can see, GPT-4 is now able to score in...

Creating a Neural Network: AI Machine Learning

A neural network is a type of machine learning algorithm modeled after the structure and function of the human brain. It is composed of a large number of interconnected "neurons," which are organized into layers. These layers are responsible for processing and transforming the input data and passing...
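To make the idea of layers concrete, here is a minimal forward pass through a tiny two-layer network, written without any library. The weights are hand-picked (not learned) so that the network computes XOR; training, the "machine learning" part, would adjust these numbers automatically from data. The function names and weights are illustrative assumptions.

```python
def step(x):
    """Threshold activation: the neuron 'fires' (outputs 1) when its input is positive."""
    return 1 if x > 0 else 0


def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs plus a bias."""
    return [step(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
            for neuron_weights, b in zip(weights, biases)]


def network(x1, x2):
    # Hidden layer: neuron 1 behaves like OR, neuron 2 behaves like AND
    hidden = layer([x1, x2], weights=[[1, 1], [1, 1]], biases=[-0.5, -1.5])
    # Output layer: "OR but not AND", which is XOR
    (output,) = layer(hidden, weights=[[1, -1]], biases=[-0.5])
    return output


for a in (0, 1):
    for b in (0, 1):
        print(a, b, network(a, b))
```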

Can Artificial Intelligence (Chat GPT) get a 7 on an SL Maths paper?

ChatGPT is a large language model that was trained using machine learning techniques. One of the standout features of ChatGPT is its mathematical abilities. It can perform a variety of calculations and solve equations. This advanced capability is made possible by the...

The mathematics behind blockchain, bitcoin and NFTs

If you've ever wondered about the maths underpinning cryptocurrencies and NFTs, then here I'm going to try and work through the basic idea behind the Elliptic Curve Digital Signature Algorithm (ECDSA). Once you understand this idea you can (in theory!) create your own digital currency or NFT...
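A toy sketch of ECDSA signing and verification, assuming the small textbook curve y² = x³ + 2x + 2 with arithmetic mod 17, where the point G = (5, 1) generates a group of 19 points. These numbers are far too small to be secure and are not the parameters Bitcoin uses (Bitcoin uses the curve secp256k1). All function names here are illustrative, not a real library's API.

```python
import hashlib
import secrets

# Toy curve y^2 = x^3 + 2x + 2 (mod 17); G = (5, 1) generates a group of N = 19 points.
P_MOD, A = 17, 2
G, N = (5, 1), 19


def add(p1, p2):
    """Add two points on the curve; None stands for the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None                                                  # P + (-P)
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow(2 * y1, -1, P_MOD) % P_MOD       # tangent slope
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD    # chord slope
    x3 = (lam * lam - x1 - x2) % P_MOD
    return x3, (lam * (x1 - x3) - y1) % P_MOD


def mul(k, point):
    """k * point by double-and-add."""
    result = None
    while k:
        if k & 1:
            result = add(result, point)
        point = add(point, point)
        k >>= 1
    return result


def hash_to_int(message):
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message, private_key):
    z = hash_to_int(message)
    while True:
        k = secrets.randbelow(N - 1) + 1          # fresh secret nonce in 1..N-1
        r = mul(k, G)[0] % N
        s = pow(k, -1, N) * (z + r * private_key) % N
        if r != 0 and s != 0:
            return r, s


def verify(message, signature, public_key):
    r, s = signature
    if not (0 < r < N and 0 < s < N):
        return False
    z = hash_to_int(message)
    w = pow(s, -1, N)
    # u1*G + u2*Q equals k*G exactly when the signature was made with the matching key
    point = add(mul(z * w % N, G), mul(r * w % N, public_key))
    return point is not None and point[0] % N == r


private_key = 7                        # secret
public_key = mul(private_key, G)       # shared with everyone
message = b"send 5 coins to Alice"
signature = sign(message, private_key)
print(signature, verify(message, signature, public_key))
```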

Finding the average distance in a polygon

Over the previous couple of posts I've looked at the average distance in squares, rectangles and equilateral triangles. The logical extension to this is to consider a regular polygon with sides of length 1. Above is pictured a regular pentagon with sides of length 1 enclosed in a 2 by 2 square...
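A Monte Carlo sketch for a regular n-sided polygon with sides of length 1, in the same spirit as enclosing the pentagon in a square: sample points in a bounding box, keep only those inside the polygon, and average the distances between pairs. Centring the polygon at the origin with circumradius 1/(2 sin(π/n)), and the function names, are assumptions.

```python
import math
import random


def regular_polygon(n):
    """Vertices (anticlockwise) of a regular n-gon with sides of length 1, centred at the origin."""
    r = 1 / (2 * math.sin(math.pi / n))  # circumradius for side length 1
    return [(r * math.cos(2 * math.pi * k / n), r * math.sin(2 * math.pi * k / n))
            for k in range(n)]


def inside(point, vertices):
    """True if point is inside (or on) the convex polygon with anticlockwise vertices."""
    px, py = point
    for (x1, y1), (x2, y2) in zip(vertices, vertices[1:] + vertices[:1]):
        # a negative cross product means the point is to the right of this edge
        if (x2 - x1) * (py - y1) - (y2 - y1) * (px - x1) < 0:
            return False
    return True


def average_distance_polygon(n, samples=20_000, seed=0):
    rng = random.Random(seed)
    vertices = regular_polygon(n)
    x_lo, x_hi = min(x for x, _ in vertices), max(x for x, _ in vertices)
    y_lo, y_hi = min(y for _, y in vertices), max(y for _, y in vertices)

    def random_point():
        # rejection sampling: draw from the bounding box until the point lands inside
        while True:
            p = (rng.uniform(x_lo, x_hi), rng.uniform(y_lo, y_hi))
            if inside(p, vertices):
                return p

    total = 0.0
    for _ in range(samples):
        total += math.dist(random_point(), random_point())
    return total / samples


print(average_distance_polygon(5))  # estimate for the regular pentagon with side 1
```

Setting n = 4 gives a (rotated) unit square, which is a handy check against the earlier square results.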

Finding the average distance in an equilateral triangle

In the previous post I looked at the average distance between 2 points in a rectangle. In this post I will investigate the average distance between 2 randomly chosen points in an equilateral triangle. Drawing a sketch. The first step is to start with an equilateral triangle...
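A Monte Carlo sketch for the equilateral triangle, assuming side length 1. Instead of rejection sampling it uses a "fold back" trick: pick u and v uniformly in [0, 1]; if u + v > 1, replace them with 1 − u and 1 − v; then A + u(B − A) + v(C − A) is uniformly distributed in triangle ABC. The names are illustrative.

```python
import math
import random

A, B, C = (0.0, 0.0), (1.0, 0.0), (0.5, math.sqrt(3) / 2)  # side length 1


def random_point_in_triangle(rng):
    u, v = rng.random(), rng.random()
    if u + v > 1:            # fold points from the other half of the parallelogram back in
        u, v = 1 - u, 1 - v
    return (A[0] + u * (B[0] - A[0]) + v * (C[0] - A[0]),
            A[1] + u * (B[1] - A[1]) + v * (C[1] - A[1]))


def average_distance_triangle(samples=50_000, seed=0):
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        total += math.dist(random_point_in_triangle(rng), random_point_in_triangle(rng))
    return total / samples


print(average_distance_triangle())
```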

What is the average distance between 2 points in a rectangle?

Say we have a rectangle, and choose any 2 random points within it. We could then calculate the distance between the 2 points. If we do this a large number of times, what would the average distance between the 2 points be? Monte Carlo...
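The Monte Carlo idea can be sketched in a few lines: pick pairs of uniformly random points, measure each distance and take the mean. The function name, sample size and seed are arbitrary choices; more samples give a steadier estimate.

```python
import math
import random


def average_distance_rectangle(width, height, samples=100_000, seed=0):
    """Monte Carlo estimate of the mean distance between two random points in a rectangle."""
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    total = 0.0
    for _ in range(samples):
        x1, y1 = rng.uniform(0, width), rng.uniform(0, height)
        x2, y2 = rng.uniform(0, width), rng.uniform(0, height)
        total += math.hypot(x2 - x1, y2 - y1)
    return total / samples


print(average_distance_rectangle(1, 1))  # close to 0.52 for the unit square
print(average_distance_rectangle(2, 1))
```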

Plotting Pi and Searching for Mona Lisa

https://www.youtube.com/watch?v=tkC1HHuuk7c

This is a very nice video from Numberphile, where they use a string of numbers (the digits of pi) and a quick Python Turtle program to create some nice graphical representations of pi. I thought I'd quickly go through the steps required for people to do this by...
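A sketch of the idea without the graphics, assuming each digit 0 to 9 is turned into one of ten directions 36° apart and we take a unit step in that direction; the exact mapping used in the video may differ. The digits come from Gibbons' unbounded spigot algorithm, so no hard-coded string of pi is needed. The function names are illustrative, and the returned points could be passed one at a time to `turtle.goto()` to draw the walk.

```python
import math
from itertools import islice


def pi_digits():
    """Yield the decimal digits of pi (3, 1, 4, 1, 5, ...) using Gibbons' spigot algorithm."""
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            yield n                      # the next digit is now certain
            q, r, n = 10 * q, 10 * (r - n * t), (10 * (3 * q + r)) // t - 10 * n
        else:
            q, r, t, k, n, l = (q * k, (2 * q + r) * l, t * l, k + 1,
                                (q * (7 * k + 2) + r * l) // (t * l), l + 2)


def pi_walk(num_digits, step=1.0):
    """Points visited when each digit d means 'move `step` at an angle of 36*d degrees'."""
    x, y = 0.0, 0.0
    points = [(x, y)]
    for d in islice(pi_digits(), num_digits):
        angle = math.radians(36 * d)
        x, y = x + step * math.cos(angle), y + step * math.sin(angle)
        points.append((x, y))
    return points


print(list(islice(pi_digits(), 10)))
points = pi_walk(500)
```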

Witness Numbers: Finding Primes

https://www.youtube.com/watch?v=_MscGSN5J6o&t=514s

The Numberphile video above is an excellent introduction to primality tests, where we conduct a test to determine whether a number is prime or not. Finding and understanding prime numbers is an integral part of number theory. I'm going to go through some examples when we take the...
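Witness numbers are usually discussed alongside the Miller–Rabin (strong probable prime) test, so here is a minimal sketch of that test; it may differ in detail from the examples in the post. `passes_strong_test(n, a)` returns False exactly when a is a witness that n is composite. Using the bases 2 and 3 together is known to give the right answer for every n below 1,373,653; beyond that more bases are needed.

```python
def passes_strong_test(n, a):
    """Return True if odd n > 2 is a strong probable prime to base a.

    A False result means a is a witness: n is definitely composite.
    """
    d, s = n - 1, 0
    while d % 2 == 0:          # write n - 1 = 2^s * d with d odd
        d //= 2
        s += 1
    x = pow(a, d, n)
    if x == 1 or x == n - 1:
        return True
    for _ in range(s - 1):     # keep squaring, looking for -1 (mod n)
        x = x * x % n
        if x == n - 1:
            return True
    return False


def is_prime(n, bases=(2, 3)):
    """Strong-probable-prime test; with bases 2 and 3 it is exact for n < 1,373,653."""
    if n < 2:
        return False
    for p in bases:            # deal with small n and easy factors first
        if n % p == 0:
            return n == p
    return all(passes_strong_test(n, a) for a in bases)


print(passes_strong_test(2047, 2))  # True: 2047 = 23 * 89 fools base 2
print(is_prime(2047))               # False: base 3 is a witness
print([n for n in range(2, 40) if is_prime(n)])
```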

Maths Games and Markov Chains

This post carries on from the previous one on Markov chains - be sure to read that first if this is a new topic. The image above is of the Russian mathematician Andrey Markov [public domain picture from here] who was the first mathematician to work in this field (in...
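A minimal sketch of the basic Markov chain mechanics: a transition matrix P, where row i lists the probabilities of moving from state i to each state, and a probability distribution that is multiplied by P once per step. The two-state matrix is a made-up example, not one from the post; after enough steps the distribution settles to the steady state π satisfying π = πP, which here is (5/6, 1/6).

```python
def step(distribution, P):
    """One step of the chain: new[j] = sum over i of distribution[i] * P[i][j]."""
    n = len(P)
    return [sum(distribution[i] * P[i][j] for i in range(n)) for j in range(n)]


def distribution_after(start, P, steps):
    dist = list(start)
    for _ in range(steps):
        dist = step(dist, P)
    return dist


# Hypothetical example: state 0 = "winning", state 1 = "losing"
P = [[0.9, 0.1],
     [0.5, 0.5]]
print(distribution_after([1, 0], P, 1))    # [0.9, 0.1]
print(distribution_after([1, 0], P, 100))  # close to the steady state [5/6, 1/6]
```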

Spotting fake data with Benford’s Law

https://www.youtube.com/watch?v=WHeOrISYWDA

In the current digital age it's never been easier to fake data - and so it's never been more important to have tools to detect data that has been faked. Benford's Law is an extremely useful way of testing data - because when people fake data they tend...
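Benford's Law predicts that the leading digit d (1 to 9) appears with probability log₁₀(1 + 1/d), so about 30.1% of values start with a 1. Below is a small sketch comparing observed leading-digit frequencies with that prediction. Powers of 2 are used as test data because they are known to follow Benford's Law closely. The function names are illustrative, and a real check for faked data would add a formal statistic such as chi-squared.

```python
import math
from collections import Counter


def benford_expected():
    return {d: math.log10(1 + 1 / d) for d in range(1, 10)}


def leading_digit_frequencies(numbers):
    """Proportion of the positive integers in `numbers` that start with each digit 1-9."""
    counts = Counter(int(str(n)[0]) for n in numbers if n > 0)
    total = sum(counts.values())
    return {d: counts[d] / total for d in range(1, 10)}


data = [2 ** k for k in range(1000)]
observed = leading_digit_frequencies(data)
expected = benford_expected()
for d in range(1, 10):
    print(d, round(observed[d], 3), round(expected[d], 3))
```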

Elliptical Curve Cryptography

Elliptic curves are a very important area of mathematics which has been greatly explored over the past few decades. They have shown tremendous potential as a tool for solving complicated number problems and also for use in cryptography. Andrew Wiles, who solved one of the most famous maths problems of the...
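To see what an elliptic curve looks like in the finite setting used in cryptography, this sketch lists every point on the small curve y² = x³ + 2x + 2 with arithmetic mod 17 (an arbitrary textbook-sized example). The count, plus the "point at infinity", is the size of the group that elliptic curve cryptography works in. Hasse's theorem says this count is always within 2√p of p + 1. The function name is illustrative.

```python
import math


def curve_points(a, b, p):
    """All points (x, y) with y^2 = x^3 + a*x + b (mod p), found by brute force."""
    return [(x, y) for x in range(p) for y in range(p)
            if (y * y - (x ** 3 + a * x + b)) % p == 0]


p, a, b = 17, 2, 2
points = curve_points(a, b, p)
order = len(points) + 1  # +1 for the point at infinity
print(order)                                     # 19
print(abs(order - (p + 1)) <= 2 * math.sqrt(p))  # Hasse's bound holds: True
```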

Prime Spirals – Patterns in Primes

One of the fundamental goals of pure mathematicians is gaining a deeper understanding of the distribution of prime numbers, which is why the Riemann Hypothesis is one of the great unsolved problems in number theory and has a $1 million prize for anyone who can solve it. Prime numbers...
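A text-only sketch of a prime spiral (the Ulam spiral), assuming the common layout: 1 in the centre, then the numbers wind anticlockwise (right, up, left, down) with side lengths 1, 1, 2, 2, 3, 3, and so on. Primes are shown as `#` and other numbers as `.`. The function names are illustrative, and the post may draw the spiral graphically instead.

```python
def primes_up_to(limit):
    """Sieve of Eratosthenes: the set of primes <= limit."""
    is_prime = [False, False] + [True] * (limit - 1)
    for i in range(2, int(limit ** 0.5) + 1):
        if is_prime[i]:
            for j in range(i * i, limit + 1, i):
                is_prime[j] = False
    return {i for i, flag in enumerate(is_prime) if flag}


def spiral_coords(limit):
    """Map each number 1..limit to its (x, y) position on the spiral."""
    coords = {1: (0, 0)}
    x = y = 0
    n = 1
    directions = [(1, 0), (0, 1), (-1, 0), (0, -1)]  # right, up, left, down
    step, d = 1, 0
    while n < limit:
        for _ in range(2):                 # each side length is used twice
            dx, dy = directions[d % 4]
            for _ in range(step):
                if n >= limit:
                    return coords
                x, y = x + dx, y + dy
                n += 1
                coords[n] = (x, y)
            d += 1
        step += 1
    return coords


def render(limit):
    coords = spiral_coords(limit)
    where = {pos: n for n, pos in coords.items()}
    primes = primes_up_to(limit)
    xs = [x for x, _ in coords.values()]
    ys = [y for _, y in coords.values()]
    rows = []
    for y in range(max(ys), min(ys) - 1, -1):   # top row first
        row = ""
        for x in range(min(xs), max(xs) + 1):
            n = where.get((x, y))
            row += " " if n is None else ("#" if n in primes else ".")
        rows.append(row)
    return "\n".join(rows)


print(render(21 * 21))
```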

Coding Hailstone Numbers

Hailstone numbers are created by the following rules:

- if n is even: divide by 2
- if n is odd: times by 3 and add 1

We can then generate a sequence from any starting number. For example, starting with 10: 10, 5, 16, 8, 4, 2, 1, 4, 2, 1... we can see...
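A short sketch of the two rules in code. It stops at the first 1, since after that the sequence just repeats 4, 2, 1. That every starting number eventually reaches 1 is the unproven Collatz conjecture, so the loop relies on it for whatever input you try. The function name is illustrative.

```python
def hailstone(n):
    """Hailstone sequence from n, stopping the first time it reaches 1."""
    seq = [n]
    while n != 1:
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        seq.append(n)
    return seq


print(hailstone(10))           # [10, 5, 16, 8, 4, 2, 1]
seq = hailstone(27)
print(len(seq) - 1, max(seq))  # 111 9232
```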

Chaos and strange Attractors: Henon’s map

Henon's map was created in the 1970s to explore chaotic systems. The general form is created by the iterative formula xₙ₊₁ = 1 − a·xₙ² + yₙ, yₙ₊₁ = b·xₙ. The classic case is when a = 1.4 and b = 0.3, i.e. xₙ₊₁ = 1 − 1.4xₙ² + yₙ, yₙ₊₁ = 0.3xₙ. To see how points are generated, let's choose a point near the origin. If we take...
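A sketch of iterating Henon's map with the classic values a = 1.4 and b = 0.3, starting at the origin and using the standard form of the map (written out in the code comment). The function name and number of points are arbitrary; plotting the returned points, for example as a scatter plot, shows the strange attractor.

```python
def henon(n_points, a=1.4, b=0.3, x=0.0, y=0.0):
    """Return the first n_points iterates of Henon's map starting from (x, y)."""
    points = []
    for _ in range(n_points):
        # standard form: x_{n+1} = 1 - a*x_n^2 + y_n,  y_{n+1} = b*x_n
        x, y = 1 - a * x * x + y, b * x
        points.append((x, y))
    return points


pts = henon(10_000)
print(pts[:3])  # approximately [(1.0, 0.0), (-0.4, 0.3), (1.076, -0.12)]
```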

Finding the average distance between 2 points on a hypercube

This is the natural extension of a previous post, which looked at the average distance between 2 randomly chosen points in a square; this time let's explore the average distance in n dimensions. I'm going to investigate what dimension of hypercube is required to have an...
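A Monte Carlo sketch for the n-dimensional unit hypercube: choose two random points with n uniform coordinates each and average their distance. The function name and sample sizes are arbitrary. For n = 1 the exact answer is 1/3, which makes a handy check. Because the mean squared distance is exactly n/6, the averages grow roughly like √(n/6) as the dimension increases.

```python
import math
import random


def average_distance_hypercube(n, samples=50_000, seed=0):
    """Monte Carlo estimate of the mean distance between two random points in [0, 1]^n."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        p = [rng.random() for _ in range(n)]
        q = [rng.random() for _ in range(n)]
        total += math.dist(p, q)
    return total / samples


for n in (1, 2, 3, 10):
    print(n, round(average_distance_hypercube(n), 3), round(math.sqrt(n / 6), 3))
```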

Generating e through probability and hypercubes

This is a really beautiful solution to an interesting probability problem posed by fellow IB teacher Daniel Hwang; I've outlined a method of solving it suggested by Ferenc Beleznay. The problem is as follows: On average, how many random real numbers from 0 to 1 (inclusive) are required...
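Assuming the problem is the classic one of adding random numbers from [0, 1] until the running total exceeds 1 (the excerpt is cut off before the exact condition), a simulation should give an average close to e ≈ 2.718. The link to hypercubes: the chance that the first n numbers sum to at most 1 is the volume of a corner of the n-dimensional unit hypercube, 1/n!, and adding up these probabilities gives 1 + 1 + 1/2! + 1/3! + ... = e. The function names and trial count are arbitrary.

```python
import math
import random


def numbers_needed(rng):
    """Count how many uniform [0, 1] numbers it takes for the running total to exceed 1."""
    total, count = 0.0, 0
    while total <= 1:
        total += rng.random()
        count += 1
    return count


def estimate_e(trials=100_000, seed=0):
    rng = random.Random(seed)
    return sum(numbers_needed(rng) for _ in range(trials)) / trials


print(estimate_e(), math.e)
```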

Have you got a Super Brain?

Adapting and exploring maths challenge problems is an excellent way of finding ideas for IB maths explorations and extended essays. This problem is taken from the book The first 25 years of the Superbrain challenges. I'm going to see how many different ways I can solve it. The problem...

Sierpinski Triangle: A picture of infinity

This pattern of a Sierpinski triangle pictured above was generated by a simple iterative program. I made it by modifying the code previously used to plot the Barnsley Fern. You can run the code I used on repl.it. What we are seeing is the result of 30,000 iterations of a simple...
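A sketch of the "chaos game", one standard way to generate a Sierpinski triangle with a simple iterative rule: start at a corner, then repeatedly pick one of the three corners at random and move halfway towards it. This is an assumption about the method; a Barnsley-Fern-style program would write it as three affine maps chosen at random, and moving halfway to a corner is exactly such a map. Points are returned rather than drawn; a scatter plot of them shows the triangle.

```python
import math
import random

CORNERS = [(0.0, 0.0), (1.0, 0.0), (0.5, math.sqrt(3) / 2)]


def chaos_game(iterations=30_000, seed=0):
    rng = random.Random(seed)
    x, y = CORNERS[0]
    points = []
    for _ in range(iterations):
        cx, cy = rng.choice(CORNERS)
        x, y = (x + cx) / 2, (y + cy) / 2   # move halfway towards the chosen corner
        points.append((x, y))
    return points


points = chaos_game()
print(len(points))  # 30000
```

Every point lands in one of the three half-size corner triangles, so the middle triangle stays empty at every scale, which is what produces the picture.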

Sphere packing problem: Pyramid design

Sphere packing problems are maths problems which have been considered over many centuries: they concern the optimal way of packing spheres so that the wasted space is minimised. You can achieve an average packing density of around 74% when you stack many spheres together, but today I want to...
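The "around 74%" figure can be checked with a short calculation, assuming the standard face-centred cubic (cannonball) arrangement. In that arrangement spheres touch along each face diagonal, so a cube of side 2√2·r contains the equivalent of 4 whole spheres of radius r. The density is therefore 4 × (4/3)πr³ / (2√2·r)³ = π/(3√2).

```python
import math

r = 1.0                               # any radius gives the same answer
cube_side = 2 * math.sqrt(2) * r      # face diagonal of the unit cell = 4r
spheres_per_cell = 4                  # 8 corners x 1/8 + 6 faces x 1/2
density = spheres_per_cell * (4 / 3) * math.pi * r ** 3 / cube_side ** 3
print(density, math.pi / (3 * math.sqrt(2)))  # both about 0.7405
```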

Square Triangular Numbers

Square triangular numbers are numbers which are both square numbers and triangular numbers, i.e. they can be arranged in a square or a triangle. The picture above (source: Wikipedia) shows that 36 is both a square number and a triangular number. The question is how many other square triangular...
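A brute-force sketch: run through the triangular numbers n(n + 1)/2 and keep those that are perfect squares, using `math.isqrt` for an exact integer square-root test. The function name is illustrative. The terms it finds also satisfy the known recurrence Nₖ₊₁ = 34Nₖ − Nₖ₋₁ + 2, which is far faster than searching.

```python
import math


def square_triangular(count):
    """First `count` positive numbers that are both triangular and square."""
    found = []
    n = 1
    while len(found) < count:
        t = n * (n + 1) // 2          # n-th triangular number
        if math.isqrt(t) ** 2 == t:   # exact perfect-square test
            found.append(t)
        n += 1
    return found


terms = square_triangular(5)
print(terms)  # [1, 36, 1225, 41616, 1413721]
# check the recurrence N(k+1) = 34*N(k) - N(k-1) + 2 on the terms found
print(all(terms[k + 1] == 34 * terms[k] - terms[k - 1] + 2 for k in range(1, len(terms) - 1)))
```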

When do 2 squares equal 2 cubes?

Following on from the hollow square investigation, this time I will investigate which numbers can be written as the sum of 2 squares, the sum of 2 cubes and the sum of 2 fourth powers, i.e. a² + b² = c³ + d³ = e⁴ + f⁴. Geometrically we can think of this as trying to find an...
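A brute-force sketch, assuming a, b, c, d, e and f are positive integers. It builds the sets of numbers up to a limit that are sums of two squares, of two cubes and of two fourth powers, then intersects them. The limit and function names are arbitrary. Note the trivial solution 2 = 1² + 1² = 1³ + 1³ = 1⁴ + 1⁴, and that multiplying any solution by t¹² gives another (scale a, b by t⁶, c, d by t⁴ and e, f by t³).

```python
def sums_of_two_powers(power, limit):
    """All numbers <= limit of the form x^power + y^power with 1 <= x <= y."""
    results = set()
    x = 1
    while 2 * x ** power <= limit:
        y = x
        while x ** power + y ** power <= limit:
            results.add(x ** power + y ** power)
            y += 1
        x += 1
    return results


def squares_cubes_fourths(limit):
    common = (sums_of_two_powers(2, limit)
              & sums_of_two_powers(3, limit)
              & sums_of_two_powers(4, limit))
    return sorted(common)


print(squares_cubes_fourths(10_000))
```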

Hollow Cubes and Hypercubes investigation

Hollow cubes like the picture above [reference] are an extension of the hollow squares investigation done previously. This time we can imagine a 3-dimensional stack of soldiers, and so try to work out which numbers of soldiers can be arranged into hollow cubes. Therefore what we need to find is what...

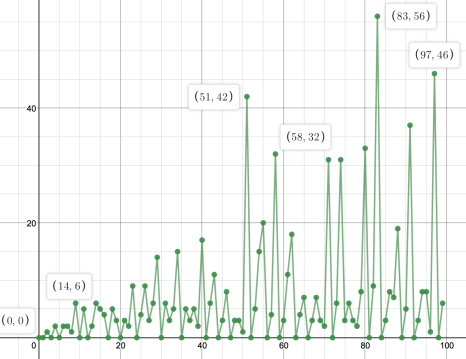

The Van Eck Sequence

https://www.youtube.com/watch?v=etMJxB-igrc

This is a nice sequence as discussed in the Numberphile video above. There are only 2 rules: If you have not seen the number in the sequence before, add a 0 to the sequence. If you have seen the number in the sequence before, count how long since you...
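A minimal sketch of the two rules, assuming the sequence starts with 0 (as in the video) and that "how long since you last saw it" counts the steps back to its previous appearance. A dictionary remembers the most recent position of each value, so each new term takes constant time. The function name is illustrative.

```python
def van_eck(length):
    """First `length` terms of the Van Eck sequence."""
    seq = [0]
    last_seen = {}  # value -> most recent index before the current term
    for i in range(length - 1):
        current = seq[i]
        seq.append(i - last_seen[current] if current in last_seen else 0)
        last_seen[current] = i
    return seq


print(van_eck(21))  # [0, 0, 1, 0, 2, 0, 2, 2, 1, 6, 0, 5, 0, 2, 6, 5, 4, 0, 5, 3, 0]
```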

Solving maths problems using computers

Computers can brute force a lot of simple mathematical problems, so I thought I'd try and write some code to solve some of them. In nearly all these cases there's probably a more elegant way of coding the problem - but these all do the job! You can run all of these with a Python...

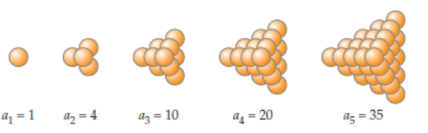

Stacking cannonballs – solving maths with code

https://www.youtube.com/watch?v=q6L06pyt9CA

Numberphile have recently done a video looking at the maths behind stacking cannonballs, so in this post I'll look at the code needed to solve this problem. Triangular based pyramid: a triangular based pyramid would have 1 ball on the top layer, 1 + 3 balls...
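A short sketch of the layer-by-layer counting. A triangular based pile with n layers holds 1 + 3 + 6 + ... + n(n + 1)/2 balls, and a square based pile holds 1 + 4 + 9 + ... + n² balls. As an example question it also searches for piles whose total is a perfect square; this is the classic cannonball problem and may not be exactly the one the post solves. The function names and search limit are illustrative.

```python
import math


def triangular_pyramid(n):
    """Balls in a triangular based pile with n layers: sum of the first n triangular numbers."""
    return sum(k * (k + 1) // 2 for k in range(1, n + 1))


def square_pyramid(n):
    """Balls in a square based pile with n layers: 1 + 4 + 9 + ... + n^2."""
    return sum(k * k for k in range(1, n + 1))


def is_square(m):
    return math.isqrt(m) ** 2 == m


print([triangular_pyramid(n) for n in range(1, 6)])  # [1, 4, 10, 20, 35]
print([(n, square_pyramid(n)) for n in range(1, 101) if is_square(square_pyramid(n))])
# [(1, 1), (24, 4900)]
print([(n, triangular_pyramid(n)) for n in range(1, 101) if is_square(triangular_pyramid(n))])
# [(1, 1), (2, 4), (48, 19600)]
```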

What’s so special about 277777788888899?

https://www.youtube.com/watch?v=Wim9WJeDTHQ

Numberphile have just done a nice video which combines mathematics and computer programming. The challenge is to choose any number (say 347) and multiply its digits: 3 × 4 × 7 = 84. Next we do 8 × 4 = 32, and next we do 3 × 2 = 6. And when we get to a single digit number...
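A sketch of the digit-multiplying process, counting how many steps it takes to reach a single digit (the number's multiplicative persistence). The function names are illustrative. 277777788888899 is known to be the smallest number that takes 11 steps, and no number needing more than 11 steps has ever been found.

```python
from math import prod


def digit_product(n):
    return prod(int(d) for d in str(n))


def persistence(n):
    """Number of digit-multiplication steps until n is a single digit."""
    steps = 0
    while n >= 10:
        n = digit_product(n)
        steps += 1
    return steps


print(persistence(347))              # 3: 347 -> 84 -> 32 -> 6
print(persistence(277777788888899))  # 11
```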