Anscombe's Quartet - the importance of graphs! Anscombe's Quartet was devised by the statistician Francis Anscombe to illustrate how important it is not to rely solely on statistical measures when analyzing data. To do this he created four data sets which produce nearly identical statistical measures. The scatter graphs above generated by the Python... Continue Reading →
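As a rough illustration of the point in the excerpt above, here is a minimal Python sketch that computes summary statistics for the four data sets. It is not the code from the original post: the values are the quartet as published by Anscombe, and the helper names (`mean`, `sample_variance`, `pearson_r`, `summarize`) are just illustrative choices.

```python
from math import sqrt

# Anscombe's quartet. Sets I-III share the same x values.
x123 = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
quartet = {
    "I": (x123, [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]),
    "II": (x123, [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]),
    "III": (x123, [7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73]),
    "IV": ([8, 8, 8, 8, 8, 8, 8, 19, 8, 8, 8],
           [6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89]),
}


def mean(values):
    """Arithmetic mean."""
    return sum(values) / len(values)


def sample_variance(values):
    """Sample variance (divides by n - 1)."""
    m = mean(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between xs and ys."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    spread = sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))
    return cov / spread


def summarize(xs, ys):
    """Collect the summary statistics that the quartet keeps nearly equal."""
    return {
        "mean_x": mean(xs),
        "mean_y": mean(ys),
        "var_x": sample_variance(xs),
        "var_y": sample_variance(ys),
        "r": pearson_r(xs, ys),
    }


summaries = {name: summarize(xs, ys) for name, (xs, ys) in quartet.items()}

for name, s in summaries.items():
    print(
        f"Set {name}: mean_x={s['mean_x']:.2f} mean_y={s['mean_y']:.2f} "
        f"var_x={s['var_x']:.2f} var_y={s['var_y']:.2f} r={s['r']:.3f}"
    )
```

Every set comes out with a mean x of 9, a mean y of about 7.50 and a correlation of about 0.82, so the numbers alone cannot tell the sets apart. Only a scatter graph of each set shows how different they really are.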

Quantum Mechanics – Statistical Universe

https://www.youtube.com/watch?v=fcfQkxwz4Oo

Quantum mechanics is the name for the mathematics that can describe physical systems on extremely small scales. When we deal with the macroscopic, i.e. the scales that we experience in our everyday physical world, Newtonian mechanics works just fine. However, on the microscopic level of particles, Newtonian mechanics... Continue Reading →

Modeling Volcanoes – When will they erupt?

A recent post by the excellent Maths Careers website looked at how we can model volcanic eruptions mathematically. This is an important branch of mathematics, which looks to assign risk to events, and its methods are very important to statisticians and insurers. Given that large-scale volcanic eruptions... Continue Reading →

Which Times Tables do Students Find Difficult? An Investigation.

There's an excellent article on today's Guardian Datablog looking at a computer-based study (with 232 primary school students) of which times tables students find easiest and which they find most difficult. Edited highlights (Guardian quotes in italics): Which multiplication did students get wrong most often? The hardest multiplication was six times... Continue Reading →

Amanda Knox and Bad Maths in Courts

This post is inspired by the recent BBC News article, "Amanda Knox and Bad Maths in Courts." The article highlights the importance of good mathematical understanding when handling probabilities, and how mistakes by judges and juries can sometimes lead to miscarriages of justice. A scenario to give to... Continue Reading →

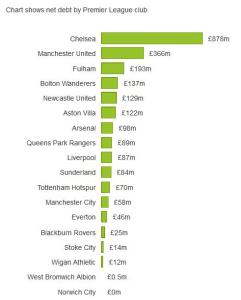

Premier League Finances – Debt and Wages

This is a great article from the Guardian DataBlog analysing the finances of last season's Premier League clubs. As the Guardian says, "More than two thirds of the Premier League's record £2.4bn income in 2011-12 was paid out in wages, according to the most recently published accounts of... Continue Reading →

IB Maths Studies (and IGCSE) Data Handling

This is a really nice worksheet and associated PowerPoint for collecting a variety of data from the class: reaction times, memory, head circumference, etc. Everything is clearly laid out, ready for students to fill in. It would also be suitable for IGCSE, and even KS3 (you would just interpret the data at different levels).

Making IB Statistics Relevant

Making Statistics Relevant is a brilliant website from the same creator as the RISPS resources, Jonny Griffiths. Each statistics topic has an extension task created to get students using their problem-solving skills. Topics covered include measures of central tendency, probability, discrete random variables, the Poisson distribution, the binomial distribution, the normal distribution and chi-squared. ... Continue Reading →